Two years into the enterprise AI rollout, most organizations still cannot answer a basic question: which of their data may be processed by an LLM, and which may not?

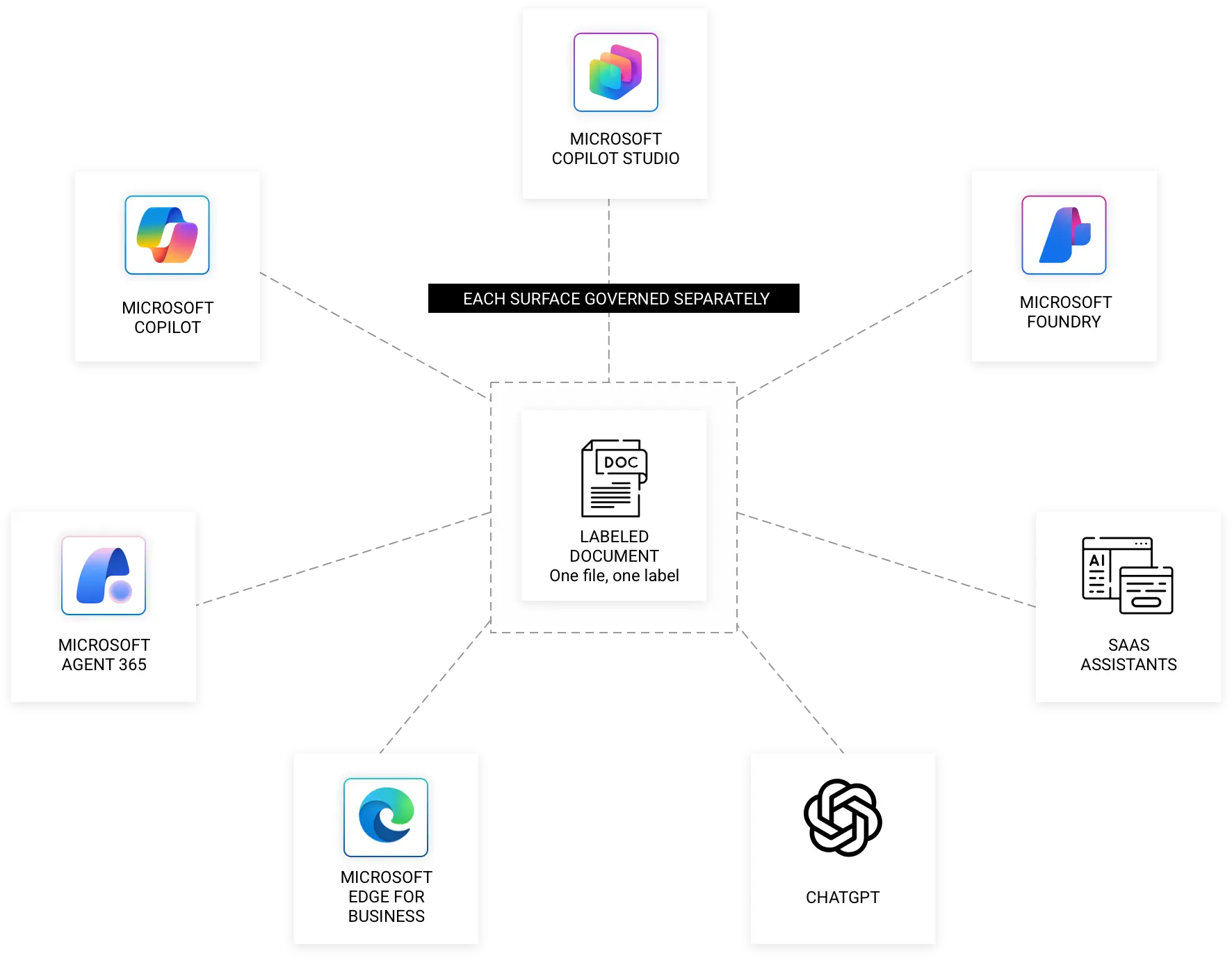

Access controls tell you who can open a file. Sensitivity labels tell you how that file is protected at rest and in transit. Neither tells you whether it should ever be retrieved by Microsoft 365 Copilot, used to ground a Microsoft Copilot Studio agent, indexed by a Microsoft Foundry workload, or pasted into ChatGPT.

That gap is the real story behind every AI risk article published in the last twelve months.